How much bandwidth is enough?

Posted by Ryan Brown on Feb 19, 2019

How much bandwidth is enough? The instinctual answer, born from harsh experience, always seems to be “more than is available”, but is this actually true? As with anything in IT, the answer is “it depends”. Fortunately, most of the key variables can be quantified.

The simplest and most obvious bandwidth-gobbling use case to examine is data protection. Data protection is a nice lumping term for backups, snapshots, clones, bare metal images, golden masters and disaster recovery. Essentially, it is a catch-all term that encompasses all of the copies of data we create, what we do with those copies, and why.

Data protection is a great example of high bandwidth-consuming services in part because the sheer volume of data generated by businesses is typically stated to exceed growth in drive sizes. It is also important because of the old axiom “if your data doesn’t exist in two places then it doesn’t exist”.

This axiom is a pithy means of getting across the importance of offsite backups and disaster recovery, but it misses a key part of that discussion: namely that humans should under no circumstances play a role in getting that data offsite. Humans are fallible. We forget to change tapes, fail to put the right tape in the bin for the courier or simply don’t pass along that job requirement to our replacements when we quit.

The above is a really long-winded way of saying “in the real world, all data protection means sending copies of your data across the internet (or a dedicated WAN link) in an automated fashion”. The long-windedness is an attempt to impress upon you the volume of data being discussed: namely, every single bit of data an organization has created or will create.

The scope of the problem being understood, let’s delve into how we might calculate bandwidth requirements.

Compression maths

In order to calculate how much bandwidth you need for data protection you first need to know a thing or two about the applications and services you are proposing to use. The reason behind this is quite simply that not all applications or services are equal.

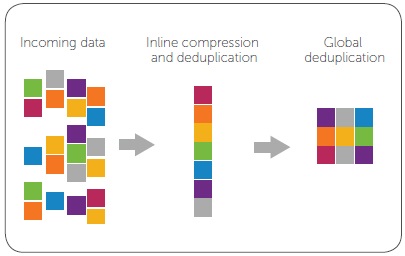

Most – but certainly not all! – data protection applications will do deduplication and/or compression. This can significantly lower the amount of data transmitted, but how they do this matters.

The simplest means of moving data would be to simply compress it and fire it over the WAN. Here you can expect to see anything from, at best, a 1.1x to 1.2x compression ratio for already heavily compressed files (like JPEGs) to a realistic 1.9x to 2.4x compression ratio for your “typical” collection of virtual machines.

Heavily duplicated traditional files (like multiple VDI clones, text documents, web servers and user profiles) can often see as high as 5x compression ratios in the real world. If you brush aside the marketing fluff, these are the sorts of numbers you’ll find real storage engineers working for the top storage companies using to gauge installations of storage equipment that does inline compression.

All of that is fair enough, and not exactly controversial. Things get a lot more messy when we talk about deduplication, or when clones and snapshots are factored in.

Math fight

Deduplication can be done a lot of different ways. The straightforward method is to keep a checksum of each block of data you store. Every time a new block of data is supposed to be created you checksum it and compare it to your existing list of checksums.

If an identical data block already exists then you don’t write that data block to your storage. You simply add another pointer in the deduplication index’s metadata that says both the original block and new block reside at the same place.
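As a minimal sketch of the checksum-and-pointer approach described above, assuming fixed 4 KB blocks and SHA-256 as the checksum (both are illustrative choices, and `DedupStore` is a hypothetical name, not any vendor's implementation):

```python
import hashlib

BLOCK_SIZE = 4096  # assumed fixed block size, in bytes


class DedupStore:
    """Toy single-device block store that deduplicates by checksum."""

    def __init__(self):
        self.blocks = {}    # checksum -> block contents (stored once)
        self.refcount = {}  # checksum -> number of pointers to that block

    def write(self, data):
        """Store data; return the list of checksums (pointers) describing it."""
        pointers = []
        for offset in range(0, len(data), BLOCK_SIZE):
            block = data[offset:offset + BLOCK_SIZE]
            checksum = hashlib.sha256(block).hexdigest()
            if checksum not in self.blocks:
                self.blocks[checksum] = block  # new block: actually store it
            # Either way, record one more pointer to the block.
            self.refcount[checksum] = self.refcount.get(checksum, 0) + 1
            pointers.append(checksum)
        return pointers

    def read(self, pointers):
        """Reassemble the original data from its pointers."""
        return b"".join(self.blocks[c] for c in pointers)


store = DedupStore()
data = b"A" * BLOCK_SIZE * 3 + b"B" * BLOCK_SIZE
pointers = store.write(data)
print(len(pointers), "logical blocks,", len(store.blocks), "blocks stored")
```

Four logical blocks go in, but only two distinct blocks are kept; the rest is metadata.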

This is fairly easy if you are going to maintain the stored blocks and the metadata all on the same device. It gets a lot more complicated when you start trying to do deduplication across a scale-out cluster of storage devices, multiple storage devices located in different geographical locations or across different storage devices from different manufacturers using different software and services.

A very simplified version might work as follows: a storage gateway may accept backups from multiple sources on a network and deduplicate/compress that data locally. It would then send metadata to the offsite service or mirror server which would respond with a list of storage blocks that it doesn’t currently have. The storage gateway would then send over only the required blocks.
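That exchange can be sketched roughly as follows. The class and function names (`RemoteSite`, `replicate`) are hypothetical, the "network" is just a method call, and SHA-256 is an assumed checksum; real products differ in the details.

```python
import hashlib


def digest(block):
    return hashlib.sha256(block).hexdigest()


class RemoteSite:
    """Stand-in for the offsite service or mirror server."""

    def __init__(self):
        self.blocks = {}  # checksum -> block contents

    def missing(self, checksums):
        """Answer the gateway's metadata with the checksums not held here."""
        return [c for c in checksums if c not in self.blocks]

    def receive(self, payload):
        self.blocks.update(payload)


def replicate(local_blocks, remote):
    """Send only the blocks the remote lacks; return payload bytes sent."""
    by_checksum = {digest(b): b for b in local_blocks}  # local deduplication
    wanted = remote.missing(list(by_checksum))          # step 1: metadata only
    payload = {c: by_checksum[c] for c in wanted}       # step 2: required blocks
    remote.receive(payload)
    return sum(len(b) for b in payload.values())


remote = RemoteSite()
first = replicate([b"a" * 4096, b"b" * 4096, b"a" * 4096], remote)
second = replicate([b"a" * 4096, b"c" * 4096], remote)
print(first, second)  # bytes of block data sent on each run
```

The second run sends a single block, because the remote already holds the other one from the first run.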

How then to measure data savings? If, for example, I take a clone or snapshot of an existing virtual machine, that clone or snapshot is nothing but metadata. It is all pointers to existing data blocks, at least until the data blocks of the original change. Despite this, that clone or snapshot is, for all practical purposes, a fully functional copy of the original virtual machine.

In a more traditional backup system, I might have to actually create an entire copy of that clone or snapshot, compress it and fire it over the WAN. A deduplicated system is only going to send the new metadata. It doesn’t need to send the data blocks, as they already exist!

In this sense, if I take many snapshots or clones I could see outrageous headline data savings ratios. I’ve seen these range from 40x to 400x on various storage devices and data protection services.
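To see where those headline numbers can come from, here is a rough sketch of the arithmetic. It assumes the ratio is reported as logical data (every copy counted in full) divided by physical data actually stored; `headline_ratio` and its parameters are illustrative names, and vendors may count differently.

```python
def headline_ratio(base_gb, copies, changed_gb_per_copy=0.0):
    """Logical size of the original plus all copies, over physical size stored."""
    logical = base_gb * (1 + copies)
    physical = base_gb + copies * changed_gb_per_copy
    return logical / physical


# A 100 GB VM with 39 untouched snapshots "saves" 40x.
print(headline_ratio(100, 39))
# The same VM with 1 GB of changed blocks per snapshot looks less dramatic.
print(round(headline_ratio(100, 39, 1.0), 1))
```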

Some people – especially marketing people working for storage companies whose devices don’t do deduplication – have a lot of problems with counting clones, snapshots or deduplication savings. To them, compression savings are the only “real” savings. If you dig too deep into conversations about data savings ratios for storage devices or data protection you will get caught in this rabbit hole and there is almost no way out of that endless debate.

Fortunately, it doesn’t actually matter in practice…though it is something to be aware of when talking to vendors about the capabilities of their products.

RTOs and RPOs in a compression-only environment

The software and storage hardware you own works the way that it works. Faffing about with what other things might or might not do is an interesting exercise come purchasing time, but we all know that there are a lot more factors at play than data savings ratios when choosing storage or data protection software.

Your Recovery Time Objective (how quickly you need to be able to restore from a failure) and your Recovery Point Objective (how much recent data you can afford to lose, which determines how many copies of the data you need to make, and how frequently) are really the key items you need to bear in mind.

If you have compression-only data protection tools then the shorter the RPO the more bandwidth you need. Short RPOs mean more copies taken more regularly. How many copies you retain is not really relevant, as the thing that will ultimately matter is how many of those copies have to be fired across the WAN.

In a compression-only environment, it’s safe to presume that you can get 2x data savings from compression. At this point you simply take the amount of data you are backing up, multiply by the number of copies sent off-site per month, and halve the result to account for compression. That gives you an idea not only of the bandwidth you need (in order to send the requisite number of copies per month) but also the data caps you’ll need.

Remember to calculate at the month level, because many backups have different RPOs, so your daily, weekly and monthly amounts will differ. Also remember to allow for TCP overhead, which can be as high as 20% on congested links.

Bear in mind that your RPOs need to factor in the time it takes data to transmit. That data doesn’t “exist” from a data protection perspective until it exists in both locations.
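The compression-only arithmetic can be sketched as below. The 2x ratio and 20% overhead come from the text above; the 30-day month, decimal gigabytes and function names are assumptions made for illustration.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600  # assumes a 30-day month


def monthly_transfer_gb(dataset_gb, copies_per_month,
                        compression_ratio=2.0, overhead=0.20):
    """GB on the wire per month: a rough figure for sizing data caps."""
    return dataset_gb * copies_per_month / compression_ratio * (1 + overhead)


def required_mbps(transfer_gb, window_seconds=SECONDS_PER_MONTH):
    """Average link speed needed to move transfer_gb within the window."""
    bits = transfer_gb * 8 * 1000 ** 3  # decimal GB to bits
    return bits / window_seconds / 1e6


# Hypothetical example: 500 GB backed up in full, 30 copies sent per month.
monthly = monthly_transfer_gb(500, 30)
print(f"{monthly:.0f} GB/month, {required_mbps(monthly):.1f} Mbps sustained")
```

Note that this is an average over the whole month. If each copy has to land inside a shorter window to meet its RPO, pass that window to `required_mbps` instead and the figure rises accordingly.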

RTO matters as well, but is more easily dealt with. If you have short RTOs then you need a local copy of your data, full stop. Anything else means your bandwidth requirements to meet RTOs will be much – much – higher than meeting RPO requirements.

I’d say this is true for most organizations. Excepting the smallest of the small, most companies would lose more money waiting for a VM to be dragged back over the WAN than they would just investing in a local storage unit to keep copies of backup data.

Just think about how long it takes you to resync your Dropbox account to a new system over residential ADSL (or to patch Server 2003 from a clean RTM install). For many technologists, that can be a matter of installing and then simply walking away and letting it do its thing for a day or more.
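A back-of-the-envelope restore-time estimate makes the point. The figures below are hypothetical, and the `efficiency` factor (the share of the nominal link speed you actually get) is an assumption rather than a measured value.

```python
def restore_hours(size_gb, link_mbps, efficiency=0.8):
    """Hours to pull size_gb across a link running at link_mbps."""
    bits = size_gb * 8 * 1000 ** 3  # decimal GB to bits
    return bits / (link_mbps * 1e6 * efficiency) / 3600


# A 200 GB VM over a 20 Mbps WAN link versus a 1 Gbps local network.
print(f"WAN: {restore_hours(200, 20):.1f} h")
print(f"LAN: {restore_hours(200, 1000):.2f} h")
```

Under these assumptions the WAN restore takes more than a day, while the local copy is back in a little over half an hour.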

The offsite stuff is mostly disaster recovery. Even if you are a small business you must have disaster recovery. If your business plan in the event of your building burning down is to fold the company and move on, you’ll still need your data in order to satisfy the tax man.

The tax man, however, can be made to wait a few days in that situation. Disaster recovery for small businesses can be a slower affair. (Larger organizations do tend to need to fully light up their data sets with transparent failover.) By contrast, salesdroids needing that VM they borked back online in order to meet some absurd promise they’ve made to a customer will stand in your office and tap their foot impatiently until the restore is done.

Of course, deduplication changes things.

RTOs and RPOs in a deduplicated environment

In a deduplicated environment the rules change. Initial synchronizations take forever, but after that updates are fairly minor affairs. The question becomes not one of the total amount of data you need to copy, but the total amount of new data blocks generated.

Here, data block size matters, as does what is doing the deduplication. If you are relying on the storage system itself to do the deduplication it’s time to have a sit-down with your storage vendor about the data block sizes of your workloads and the data block sizes of your storage.

Deduplication on storage devices is all about making sure that the data occupies the smallest possible amount of storage. This doesn’t necessarily mean it is optimized for transiting the WAN.

Data protection software that is optimized for WAN traversal, for example, is usually willing to sacrifice disk storage space for WAN optimization by deduplicating variable block sizes, including blocks smaller than the storage size of the underlying hardware. WAN-optimized data protection software doesn’t care if the data takes up a few extra % of disk storage so long as it can save some WAN bandwidth.

This matters most in mixed-workload environments. Regular old NTFS workloads, for example, are frequently 4K blocks. Storage installed by vendors who are expecting standard Windows applications to be run on their storage will optimize their storage for that size.

SQL, however, is frequently 64K blocks, and Exchange is usually 8K blocks. Many storage vendors will put in hardware designed to store (or at least deduplicate) at those block sizes for those workloads because this can make those workloads perform better.

Those units can eventually be used for mixed workloads, or repurposed from their original task to new workloads without anyone thinking about the block size setting. In a deduplicated environment knowing your storage and your workloads are both key to understanding what the real amount of data churn per month is.
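To illustrate why block size affects measured churn, the sketch below counts how much data is marked as changed when the same set of writes is tracked at two different block sizes. It assumes fixed-size, aligned blocks where any write dirties the whole block it touches; `dirty_bytes` is a hypothetical helper, not a vendor tool.

```python
def dirty_bytes(writes, block_size):
    """Bytes that must be re-sent, given (offset, length) writes in bytes."""
    dirty = set()
    for offset, length in writes:
        if length <= 0:
            continue
        first = offset // block_size
        last = (offset + length - 1) // block_size
        dirty.update(range(first, last + 1))  # every block the write touches
    return len(dirty) * block_size


# 100 scattered 4 KB writes, one per MiB of the volume.
writes = [(i * 1024 * 1024, 4096) for i in range(100)]
print(dirty_bytes(writes, 4 * 1024))   # tracked in 4K blocks
print(dirty_bytes(writes, 64 * 1024))  # tracked in 64K blocks
```

The workload is identical, yet tracking it in 64K blocks reports sixteen times as much changed data as tracking it in 4K blocks.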

Simple tools

Not everyone has a storage team. A lot – probably most – IT teams are generalists that rely on external consultants, VARs, MSPs or even just vendor sales people to get the job done. Fortunately, there are tools to help us make rough guesses about our needs.

Those who have deployed enterprise-level tools like VMware’s vCOPS should be able to get information on data churn easily enough. You can also do this manually by using the snapshot method: measure the snapshot space used over a period of time of a regular snapshot schedule. This can be done at a VM level or the level of an entire datastore (if you are doing your snapshots at the array).
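A rough sketch of the snapshot method: given the space consumed by each snapshot on a regular schedule, estimate average and peak churn per day. The sample sizes and the six-hour interval are made up for illustration, and the function name is hypothetical.

```python
from statistics import mean


def churn_gb_per_day(snapshot_sizes_gb, interval_hours):
    """Return (average, peak) daily churn implied by per-snapshot space used."""
    snapshots_per_day = 24 / interval_hours
    average = mean(snapshot_sizes_gb) * snapshots_per_day
    peak = max(snapshot_sizes_gb) * snapshots_per_day
    return average, peak


# Space used by four consecutive snapshots taken six hours apart.
average, peak = churn_gb_per_day([1.5, 2.0, 2.5, 2.0], interval_hours=6)
print(f"average {average:.1f} GB/day, peak {peak:.1f} GB/day")
```

Sizing to the peak rather than the average is the more cautious choice if your RPOs are tight.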

One little known tool is the Microsoft Azure Cost Estimator Tool (https://www.microsoft.com/en-us/download/details.aspx?id=43376). This tool will take a look at your running infrastructure and provide you detailed information about what your VMs do.

Brushing aside its purpose as a migration tool to get your workloads into Microsoft’s public cloud, this tool is one of the simplest available to get real, hard numbers about an entire infrastructure’s worth of workloads. This data includes the amount of I/O for each workload. It has the ability to profile your infrastructure over a variety of timeframes.

The I/O measured by the Microsoft Azure Cost Estimator Tool isn’t write only, so you’ll have to have some understanding of your workload (and block size) to convert that information into something useful for bandwidth measurements, but it does provide an important bit of information other methods lack: a per-VM assessment of real world IOPS. If you are about to engage in regular backups (or change your backup frequency), it helps to know the IOPS load of each VM so that you can estimate whether or not your array (or your storage gateway) can handle the backup load.
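Converting an IOPS figure into a bandwidth estimate might look like the sketch below. The write fraction and block size are things you have to supply from your own knowledge of the workload; the values shown are hypothetical, and the result is an upper bound, since repeated writes to the same block only need to be replicated once per backup.

```python
def write_mbps(iops, write_fraction, block_size_kb):
    """Upper-bound write throughput in Mbps implied by an IOPS figure."""
    bytes_per_second = iops * write_fraction * block_size_kb * 1024
    return bytes_per_second * 8 / 1e6


# Hypothetical VM: 500 IOPS, 30% of them writes, 8K blocks.
print(f"{write_mbps(500, 0.30, 8):.2f} Mbps")
```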

Parting thoughts

Once you have some idea of your data churn you can make reasoned guesses about how much bandwidth you’ll need. You won’t need to replicate 100% of writes. That said, you also have to factor in network overhead to your calculations. In practice, I’ve found that these two things largely come out in the wash such that if you can determine your data churn rate you are very, very close to the bandwidth you’ll need.

Be aware of seasonality. Many businesses are very seasonal. Measuring your data churn during the January lull can get you into a lot of trouble if you undersize your bandwidth and get slammed during the holiday rush!

Also remember to factor in growth. If you have records, use them. These records can be in the form of performance data in your hypervisor management tools, your analytics platform, your storage array or even just looking at how much storage you bought over the last few refresh cycles and making a broad-stroke guess.
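Growth and seasonality can be folded into the estimate with simple compounding, as in this sketch. The growth rate, time horizon and seasonal peak factor are placeholders you would replace with figures from your own records.

```python
def projected_mbps(current_mbps, annual_growth, years, seasonal_peak_factor=1.0):
    """Bandwidth needed after compounding growth, scaled for the busy season."""
    return current_mbps * (1 + annual_growth) ** years * seasonal_peak_factor


# 30 Mbps measured in a quiet month, 25% yearly growth, three-year horizon,
# with the busy season assumed to run at 1.5x the quiet-month churn.
print(f"{projected_mbps(30, 0.25, 3, 1.5):.1f} Mbps")
```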

My own personal experience is that our data churn is usually much smaller than we initially estimate; however, we make a lot more copies of our data than we realize. We back things up with scripts, various backup programs, then back up entire file servers or virtual infrastructures that contain not just the primaries but the copies as well.

The net result of this is that deduplication is your friend. If you rely on compression alone then your bandwidth requirements will almost certainly exceed your ability to afford them. With deduplication, you have a fighting chance.

Investigate storage gateways that keep a local copy of the backups on your site and provide global metadata for deduplicated blocks that spans the local and remote site. And above all remember: if your data doesn’t exist in more than one location, it does not exist.

Guest Post: Trevor Pott